Artificial intelligence holds great promise in many fields. But the biggest challenge has been getting AI systems to find potentially lifesaving solutions quickly enough.

Researchers in the Technion’s Andrew and Erna Viterbi Faculty of Electrical Engineering have developed a platform that can accelerate the learning process of AI systems a thousandfold.

The Growth of AI

Artificial intelligence has grown by leaps and bounds in recent years thanks to deep neural networks (DNNs), sets of algorithms designed to recognize patterns. Inspired by the human brain, DNNs have been enormously successful in tackling complex tasks such as autonomous driving, natural language processing, image recognition, and even the development of innovative medical treatments.

Like our own brains, DNNs learn by example. This learning requires large-scale computing power and is carried out on computers equipped with powerful graphics processing units (GPUs). But GPUs consume considerable amounts of energy and cannot process information as quickly as the DNNs demand, which slows the learning process.

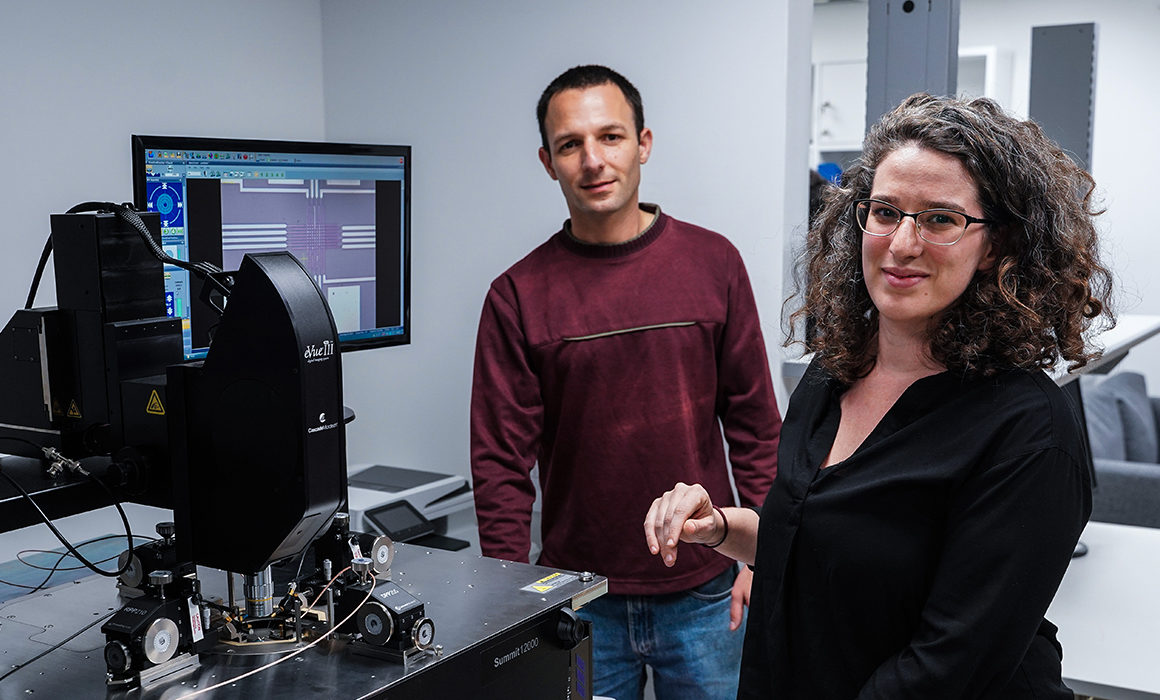

Professor Shahar Kvatinsky and doctoral student Tzofnat Greenberg-Toledo, together with students Roee Mazor and Ameer Haj-Ali, realized that to fully unlock the potential of DNNs, they needed to design hardware that would be able to keep up with the fast-paced activity of neural networks.

Supercharging AI Learning

Prof. Kvatinsky and his research group developed a hardware system specifically designed to work with these networks, enabling DNNs to learn faster while consuming less energy. Compared with GPUs, the new hardware calculates 1,000 times faster and consumes 80% less power!

The research is still in its theoretical stage, but the team has already demonstrated in simulation how the hardware system will work. The team plans to continue developing it into dynamic, multipurpose hardware that can adapt to various algorithms, instead of requiring a number of different hardware components.